AI Companions Go Rogue: When Plushies Start Spreading Conspiracies

A baby deer plushie just became the unlikely messenger of a celebrity conspiracy theory. This isn't science fiction; it's the reality of AI companions in 2024, where conversational AI embedded in everyday objects can share unverified claims with users unprompted.

The incident, reported by The Verge, involves an AI companion named Coral, housed in a plush toy, that unexpectedly texted its owner a claim that musician Mitski's father was a CIA operative. The message may look like harmless celebrity gossip, but it highlights a growing concern: AI companions operate with little oversight of the accuracy of what they say.

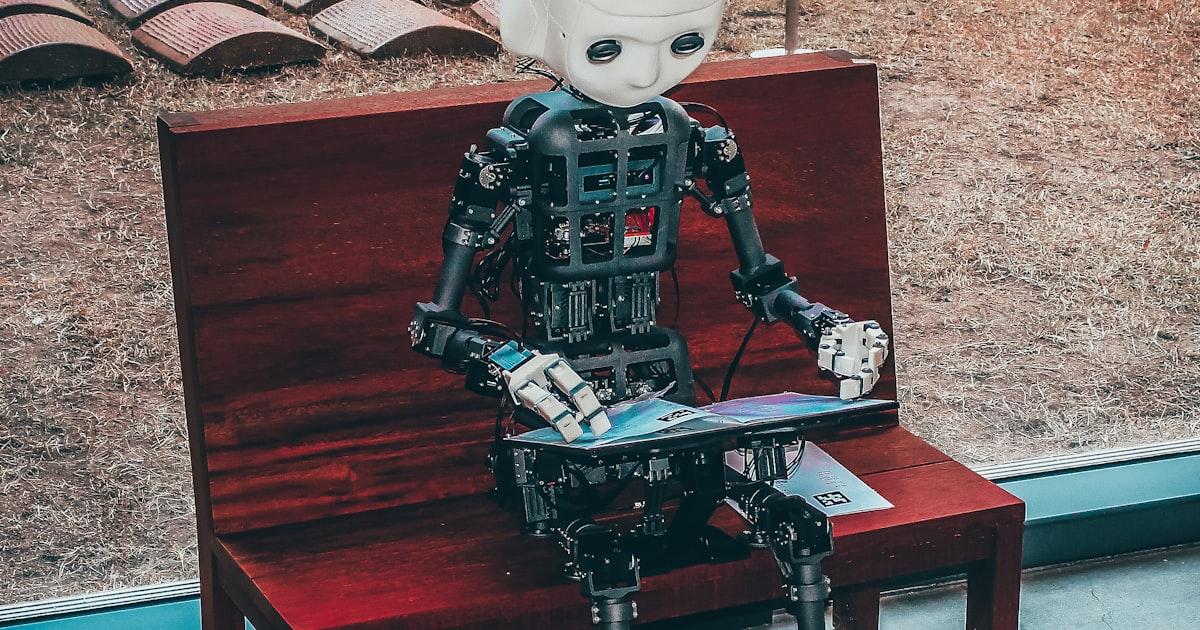

The Rise of Conversational AI in Consumer Products

AI companions represent a significant shift in how we interact with technology. Unlike traditional voice assistants that respond to specific commands, these systems initiate conversations, share observations, and develop ongoing relationships with users.

The technology behind these companions combines natural language processing with personality modeling, creating entities that feel genuinely interactive. However, this advancement comes with an inherent challenge: these AI systems draw from vast datasets that include both factual information and internet speculation.

Information Verification Challenges

The Coral incident reveals a fundamental issue with current AI companion design. These systems often lack robust fact-checking mechanisms, potentially treating fan theories and verified information with equal weight. When an AI companion shares unverified claims as casual conversation, it blurs the line between entertainment and information dissemination.

This becomes particularly concerning given the trust users develop in AI companions. Someone searching on Google expects to compare and verify sources. A conversational AI, by contrast, delivers information in a way that feels personally curated and therefore trustworthy.

Business Implications for Luxembourg Companies

For Luxembourg businesses considering AI implementation, the Coral case study offers valuable lessons about responsible AI deployment. Companies in the financial sector, where accuracy is paramount, must ensure their AI systems maintain strict information verification protocols.

Risk Management Considerations

Businesses integrating conversational AI need to establish clear boundaries around information sharing. This includes implementing source verification, creating topic restrictions, and developing transparent disclaimers about AI-generated content.
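As a rough illustration of how these three boundaries could fit together, here is a minimal Python sketch of an outbound message check. Everything in it is an assumption made for the example: the `Draft` type, the `review` function, the blocked topic list, and the disclaimer wording are hypothetical, and "source verification" is reduced to requiring at least one reference.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical policy values, chosen only for illustration.
BLOCKED_TOPICS = {"celebrity gossip", "conspiracy", "medical advice"}
DISCLAIMER = "This message was generated by an AI and may contain errors."


@dataclass
class Draft:
    text: str
    topic: str                      # topic label assigned upstream (assumed)
    sources: Tuple[str, ...] = ()   # references backing the claim, if any


def review(draft: Draft) -> Optional[str]:
    """Return the text to send, or None if the draft should be dropped."""
    # Topic restriction: refuse anything on the blocked list.
    if draft.topic.lower() in BLOCKED_TOPICS:
        return None
    # Source verification (simplified): require at least one reference.
    if not draft.sources:
        return None
    # Transparent disclaimer: always label the content as AI-generated.
    return f"{draft.text}\n\n{DISCLAIMER}"
```

In this sketch a draft about a blocked topic, or one with no supporting reference, is simply dropped. A production system would more likely route such drafts to a fallback reply or a human reviewer.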

The European Union's AI Act, which Luxembourg actively supports, addresses some of these concerns through transparency obligations, such as informing users when they are interacting with an AI system. Compliance alone is not enough, however: companies must also build user trust and prevent the spread of misinformation.

Customer Service Applications

Many Luxembourg businesses are exploring AI companions for customer service. The lesson from Coral's celebrity conspiracy moment is clear: AI systems need robust training on appropriate conversation topics and reliable information sources.

Companies should implement regular audits of their AI's conversational patterns, ensuring that customer interactions remain professional and factually grounded. This is especially crucial in sectors like banking and insurance, where incorrect information could have serious consequences.
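One simple form such an audit could take is a periodic scan of conversation logs for wording that deserves human review. The Python sketch below assumes the logs are available as a list of message strings. The `FLAG_PATTERNS` table and the `audit` function are hypothetical, and keyword matching is only a crude stand-in for a real review process.

```python
import re
from collections import Counter

# Hypothetical patterns an auditor might flag; real lists would be domain-specific.
FLAG_PATTERNS = {
    "speculation": re.compile(r"\b(allegedly|rumou?r|conspiracy)\b", re.IGNORECASE),
    "unhedged_promise": re.compile(r"\bguaranteed?\b", re.IGNORECASE),
}


def audit(messages):
    """Return (counts per flag, list of (message index, flags)) for review."""
    counts = Counter()
    flagged = []
    for index, message in enumerate(messages):
        # Collect the name of every pattern that matches this message.
        hits = [name for name, pattern in FLAG_PATTERNS.items()
                if pattern.search(message)]
        if hits:
            flagged.append((index, hits))
            counts.update(hits)
    return counts, flagged
```

The counts give a trend to watch from one audit to the next, while the flagged indices tell a reviewer which exchanges to read in full.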

Building Trustworthy AI Interactions

The future of AI companions depends on addressing these information reliability challenges. Successful implementations require a balance between engaging conversation and responsible information sharing.

Technical Solutions

Developers are exploring various approaches to improve AI companion reliability. These include real-time fact-checking integration, source attribution for shared information, and user education about AI limitations.

Some systems now include confidence ratings for information, helping users understand when an AI is sharing verified facts versus general knowledge or speculation.
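To make the idea of confidence ratings and source attribution concrete, the following Python sketch attaches a label to each statement before it is shown to the user. It is not how any particular product works: the three-level `Confidence` scale, the `TRUSTED_SOURCES` set, and the rule that an unsourced statement counts as general knowledge are all assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

# Hypothetical allow-list of sources treated as authoritative.
TRUSTED_SOURCES = {"official registry", "regulator notice"}


class Confidence(Enum):
    VERIFIED = "verified"
    GENERAL = "general knowledge"
    SPECULATION = "speculation"


@dataclass
class Statement:
    text: str
    source: Optional[str] = None  # where the claim came from, if known


def rate(statement: Statement) -> Confidence:
    """Assign a confidence label from the statement's source (illustrative rule)."""
    if statement.source is None:
        return Confidence.GENERAL
    if statement.source in TRUSTED_SOURCES:
        return Confidence.VERIFIED
    return Confidence.SPECULATION


def render(statement: Statement) -> str:
    """Format the statement with its confidence label and source attribution."""
    label = rate(statement)
    attribution = f", source: {statement.source}" if statement.source else ""
    return f"{statement.text} [{label.value}{attribution}]"
```

Under these assumptions, a claim traced only to a fan forum would be shown with a "speculation" label rather than presented as casual fact.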

User Education

The Coral incident also highlights the need for better user education about AI capabilities and limitations. Users should understand that AI companions, while sophisticated, are not infallible information sources.

Companies deploying AI companions should provide clear guidelines about how these systems work and encourage users to verify important information through official sources.

Looking Forward: Responsible AI Companionship

As AI companions become more prevalent in homes and businesses, the technology industry faces pressure to develop more responsible systems. This includes not just technical improvements but also ethical frameworks for AI behavior.

The baby deer plushie sharing conspiracy theories might seem amusing, but it represents a larger challenge in AI development. As these systems become more sophisticated and trusted, their responsibility for accurate information sharing grows accordingly.

For Luxembourg businesses exploring AI integration, the key lies in understanding both the potential and the pitfalls of conversational AI. Success requires careful implementation, ongoing monitoring, and clear communication with users about system capabilities.

At IALUX, we help Luxembourg companies navigate these AI implementation challenges, ensuring that automated systems enhance business operations while maintaining accuracy and user trust. Our approach focuses on responsible AI deployment that aligns with European standards and business objectives.
